Keep an eye on table metadata growth, especially for tables with frequent updates or schema changes. For example, Monte Carlo can monitor Apache Iceberg tables for data quality incidents, where other data observability platforms may be more limited. There may be integration challenges given that Apache Iceberg is a relatively new format and your storage and compute will be less tightly coupled. You will also want to investigate supporting capabilities as you build out your modern data stack. Popular query engines include Apache Spark, Snowflake, and Trino. They translate queries into operations that can be performed on the data managed by Iceberg. Query engines enable you to process and analyze data stored in Iceberg tables. Integrate with compatible query engines and other solutions See the docs page Structured Streaming for how to do incremental processing with Spark or this Alibaba guide on how to do the same with Flink. Take advantage of this feature for more efficient data processing, especially for streaming data. Iceberg supports incremental processing, in other words reading only the data that has changed between two snapshots. Iceberg supports data compaction to merge small files, which can help maintain optimal file sizes. Smaller files can lead to inefficient use of resources, while larger files can slow down query performance. Optimize file sizesĪim for a balance between too many small files and too few large files. Schema changes can still create challenges downstream in the data pipeline and may require data observability or other data monitoring to fully prevent data quality issues. Notice that even though the name and position of the column have changed, its unique ID (2) remains the same.

The new schema would look like this: 1: order_id (long) Now, let’s say you want to update the schema by changing the name of the “customer_id” column to “client_id” and moving it to the last position. Suppose you have a table called “sales” with the following schema: 1: order_id (long)Įach column in the schema has a unique ID (1 to 5). Here’s an example to illustrate this concept: Make use of this feature to add, update, or delete columns as needed, without having to perform manual data migrations. One of Iceberg’s strengths is its ability to handle schema changes more seamlessly. Remember to regularly monitor the performance of your partitioning strategy and adjust it as needed. This means that users don’t need to be aware of the partitioning scheme when querying the data, enabling more efficient partition pruning and reducing the risk of errors in queries. Iceberg also supports hidden partitioning, which simplifies querying by keeping partitioning abstracted from to users. Bucketing can help evenly distribute data across multiple files within each partition, improving query performance and storage efficiency.

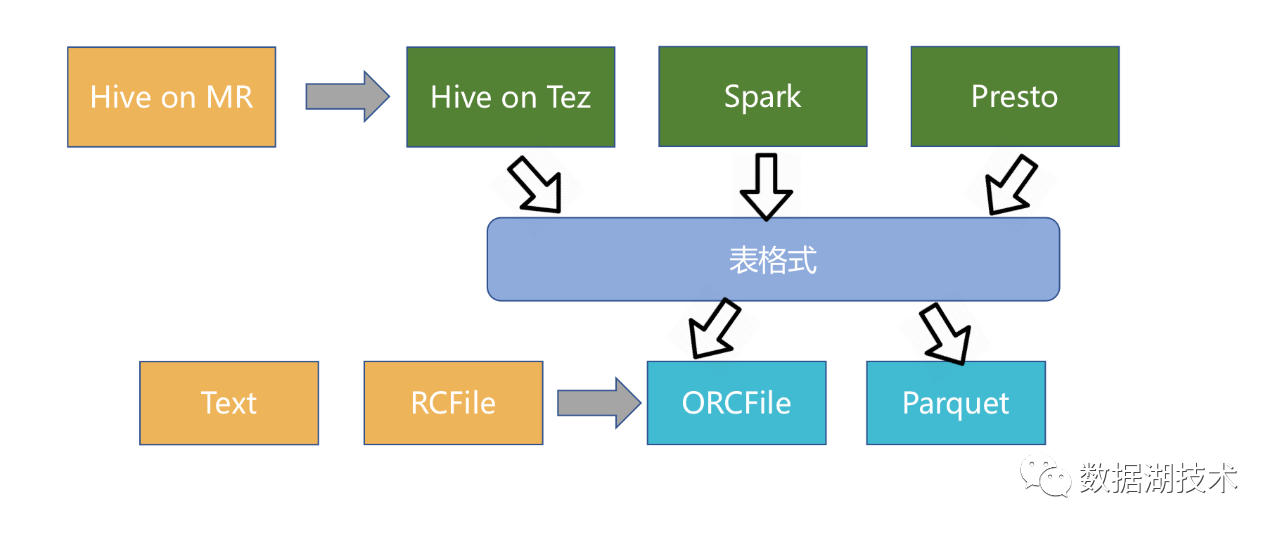

In cases where a single partition column does not adequately distribute the data, consider combining partitioning with bucketing. Avoid using columns with low cardinality or skewed data distribution, as they can lead to an unbalanced distribution of data across partitions. High-cardinality columns with an even distribution of data values are often good choices for partitioning. Partition your tables based on the access patterns and query requirements of your data. Partitioning can significantly improve query performance by reducing the amount of data scanned. They can be used as external tables to meet data residency requirements within a modern data stack as Snowflake recommends, or they can be the foundation of an organization’s data platform, either self-managed or by using a platform such as Tabular.Īs more organizations recognize the value of Iceberg and adopt it for their data management needs, it’s crucial to stay ahead of the curve and master the best practices for harnessing its full potential. Iceberg tables are also incredibly extensible as they support multiple file formats (Parquet, AVRO, ORC) and are compatible with multiple query engines (Snowflake, Trino, Spark, Flink). What makes Iceberg tables so appealing is they can store raw data at scale to support typical data lake use cases, but they also have data lakehouse-like properties as well such as well-organized metadata, ACID transactions, and critical features like time travel. It’s designed to improve upon the performance and usability challenges of older data storage formats such as Apache Hive and Apache Parquet.

Initially developed by Netflix and later donated to the Apache Software Foundation, Apache Iceberg is an open-source table format for large-scale distributed data sets. If you don’t know Apache Iceberg, you might find yourself skating on thin ice.
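To make the schema evolution example concrete, here is a minimal Python sketch of the idea that columns are tracked by a stable ID rather than by name or position. It does not use the Iceberg API; it is a toy model in which stored rows are keyed by column ID and a schema maps IDs to names. Only order_id (ID 1) and customer_id (ID 2) come from the example; the `amount` column, its ID, the sample values, and the helper names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Column:
    field_id: int  # stable ID that survives renames and reordering
    name: str      # display name, free to change


def rename_and_move_last(schema, old_name, new_name):
    """Return a new schema with one column renamed and moved to the end.

    The column keeps its field_id; only its name and position change.
    """
    target = next(c for c in schema if c.name == old_name)
    rest = [c for c in schema if c.field_id != target.field_id]
    return rest + [Column(target.field_id, new_name)]


def read_row(stored_values, schema):
    """Project a stored row (keyed by field ID) through a schema."""
    return {c.name: stored_values[c.field_id] for c in schema}


# Toy schema: IDs 1 and 2 follow the example; "amount" (ID 3) is hypothetical.
old_schema = [Column(1, "order_id"), Column(2, "customer_id"), Column(3, "amount")]
new_schema = rename_and_move_last(old_schema, "customer_id", "client_id")

# A row written before the schema change, stored by field ID rather than by name.
stored_row = {1: 1001, 2: 42, 3: 19.99}

old_view = read_row(stored_row, old_schema)
new_view = read_row(stored_row, new_schema)
print(old_view)
print(new_view)
```

Because the stored row is resolved by ID, the same data reads correctly under both schemas, and the rename requires no rewrite of existing rows. That is the property the example highlights when it notes that ID 2 stays the same.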
